My research focuses on utilising machine learning techniques to extract insightful representations from large, complex data generated by heterogeneous data streams (wearables, cameras, LiDAR) embedded in intelligent systems (e.g. robots, drones, smart homes). The aim is to achieve a better understanding of the environments in which these sensors, and the systems as a whole, are deployed, and to maximise the reward or return of the task of interest (e.g. in robot exploration of human-centred environments, maximising human-robot interactions).

I am currently working in collaboration with KV Hephaestus Defence Ltd. The project can be summarised as follows:

This six-month project will deliver a validated simulation environment for KV’s UAV, benchmarked baseline navigation/SLAM performance, and a structured feasibility and risk assessment of candidate sensing modalities (including their limitations and implications for future sensor fusion). These outputs will be packaged as the technical evidence base for subsequent grant proposals, which will extend the work from single-UAV autonomy to coordinated multi-drone operations and real-time decision-making informed by both onboard UAV sensing and complementary inputs from distributed environmental sensor stations.

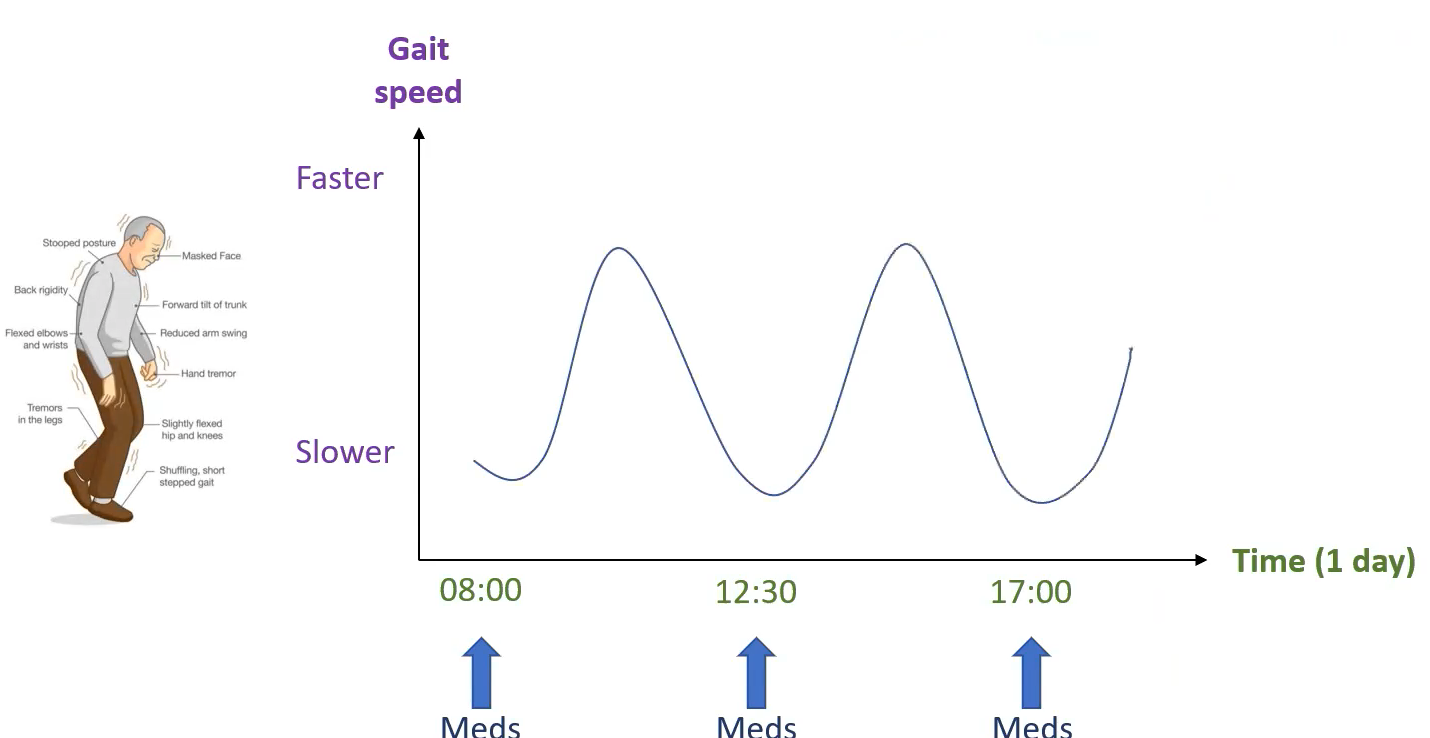

The vision of the EPSRC-funded SPHERE IRC is to address a range of healthcare needs by applying data fusion and pattern recognition to a common platform of sensors in the home. One application is to monitor the progression of Parkinson’s disease continuously and more accurately, without being confounded by hourly or daily fluctuations. The PD-SENSORS project takes the first step towards autonomous assessment of people with Parkinson’s disease using multi-sensory data from SPHERE houses.

MIMRee (Multi-Platform Inspection, Maintenance & Repair in Extreme Environments) is a £4.2m project funded by Innovate UK and industry, focused on building the world’s first fully autonomous and comprehensive multi-robot platform for the inspection, maintenance and repair of offshore wind farms. In this project, I was involved in developing planning and coordination technology for the various robotic assets, which include drones, an autonomous surface vessel, a climbing inspection and maintenance robot, and a multi-functional arm. I was also responsible for the human-robot interface.

Prometheus is a £2m project funded by Innovate UK and industry, focused on building an intelligent, reconfigurable drone for the exploration of confined spaces such as underground mines. In this project, I co-developed the drone's intelligent exploration and autonomous navigation capabilities in confined spaces.

STRANDS will produce intelligent mobile robots that are able to run for months in dynamic human environments. The project aims to provide robots with the longevity and behavioural robustness necessary to make them truly useful assistants in a wide range of domains. Such long-lived robots will be able to learn from a wider range of experiences than has previously been possible, creating a whole new generation of autonomous systems able to extract and exploit the structure in their worlds. In this project, I was responsible for creating statistical models that reliably capture and summarise aggregate human occupancy behaviour from various data streams. These models were used to drive task planning towards prioritising tasks that maximise robot learning and human-robot interactions.
